Difference between revisions of "CH391L/HiddenMarkovModel"

From Marcotte Lab

Revision as of 11:27, 19 February 2011

Viterbi Algorithm

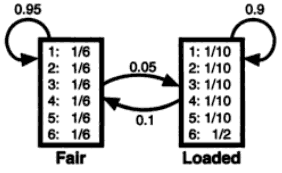

This is the 'dishonest casino' example from the book 'Biological Sequence Analysis' by Durbin et al. A casino uses two types of dice: a 'fair' die that gives all six numbers an equal chance, and a 'loaded' die that rolls a 6 with higher probability than the other numbers. We have a sequence of dice rolls from the casino and want to estimate when the dealer switched the dice.

We assume the following Hidden Markov Model for this example:

Both the emission probabilities and the transition probabilities are given.

<pre>
[Emission probability]
Fair:   1/6 1/6 1/6 1/6 1/6 1/6
Loaded: 0.1 0.1 0.1 0.1 0.1 0.5

[Transition probability]
F -> F : 0.95
F -> L : 0.05
L -> L : 0.90
L -> F : 0.10
</pre>
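To make the decoding step concrete, here is a minimal Python sketch of the Viterbi algorithm applied to the probabilities listed above. It is an illustration under stated assumptions, not the course's reference implementation: the page does not give an initial state distribution, so the sketch assumes the dealer starts with either die with probability 0.5, and the names `viterbi`, `EMISSION`, `TRANSITION`, and `START` are chosen here for illustration. Log-probabilities are used so that long roll sequences do not underflow.

```python
import math

STATES = ("F", "L")  # F = fair die, L = loaded die

# Emission probabilities for faces 1..6, copied from the model above.
EMISSION = {
    "F": [1 / 6] * 6,
    "L": [0.1, 0.1, 0.1, 0.1, 0.1, 0.5],
}

# Transition probabilities TRANSITION[from][to], copied from the model above.
TRANSITION = {
    "F": {"F": 0.95, "L": 0.05},
    "L": {"F": 0.10, "L": 0.90},
}

# Assumption: the start distribution is not given in the text; use 0.5 / 0.5.
START = {"F": 0.5, "L": 0.5}


def viterbi(rolls):
    """Return the most probable state path (e.g. 'FFLL') for rolls in 1..6."""
    if not rolls:
        return ""

    # score[s] = best log-probability of any state path ending in state s.
    score = {
        s: math.log(START[s]) + math.log(EMISSION[s][rolls[0] - 1])
        for s in STATES
    }
    backpointers = []  # one dict per roll after the first: state -> best predecessor

    for roll in rolls[1:]:
        new_score, pointer = {}, {}
        for s in STATES:
            # Pick the predecessor that maximizes the path score into state s.
            best_prev = max(
                STATES, key=lambda p: score[p] + math.log(TRANSITION[p][s])
            )
            pointer[s] = best_prev
            new_score[s] = (
                score[best_prev]
                + math.log(TRANSITION[best_prev][s])
                + math.log(EMISSION[s][roll - 1])
            )
        backpointers.append(pointer)
        score = new_score

    # Trace back from the best final state to recover the full path.
    state = max(STATES, key=lambda s: score[s])
    path = [state]
    for pointer in reversed(backpointers):
        state = pointer[state]
        path.append(state)
    return "".join(reversed(path))


if __name__ == "__main__":
    rolls = [1, 2, 3, 4, 5] * 4 + [6] * 20
    print(viterbi(rolls))
```

The returned string has one character per roll, so a change from `F` to `L` (or back) marks the estimated point where the dealer exchanged the dice. A different choice of start distribution can change the decoded state of the first few rolls.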